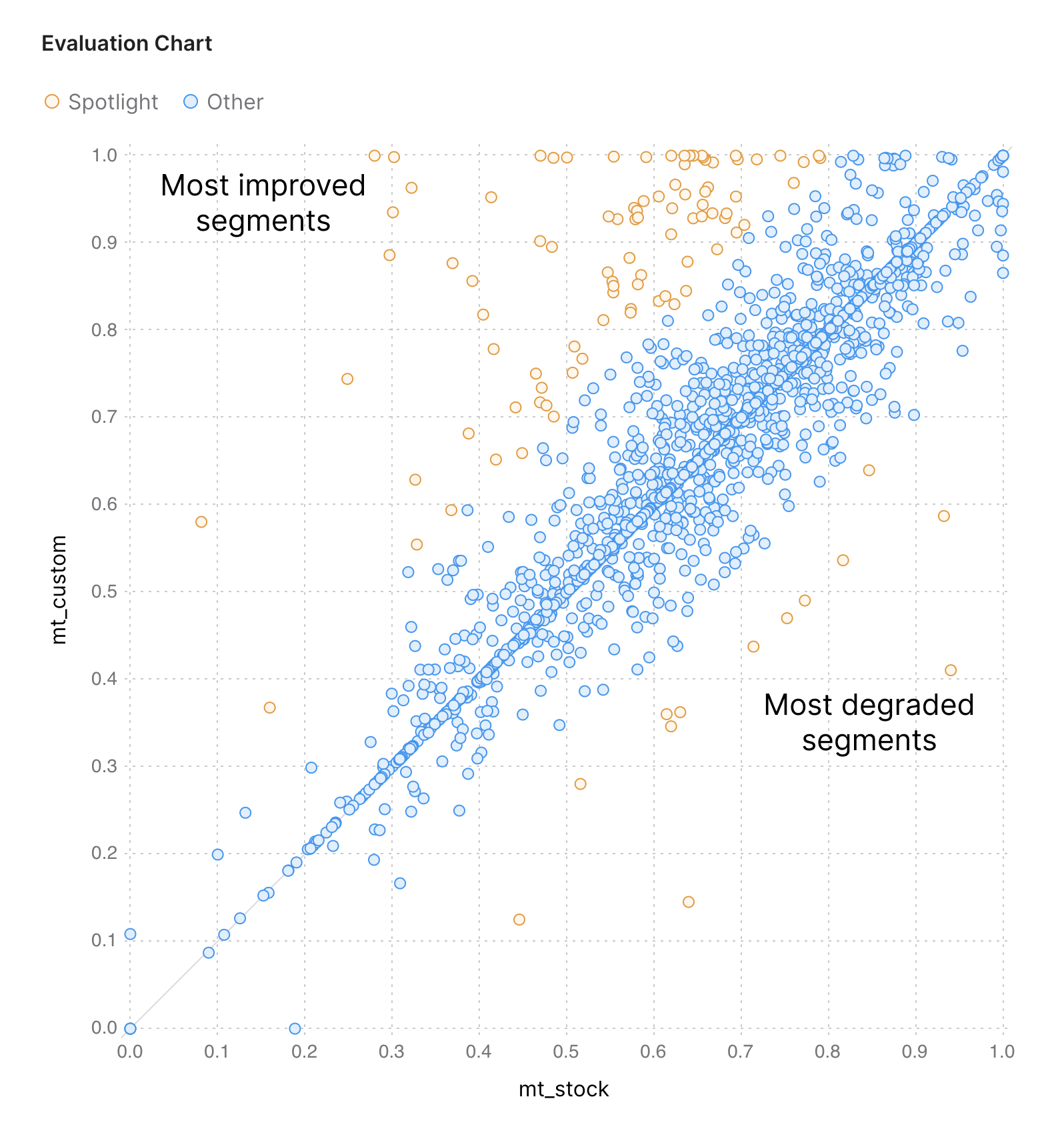

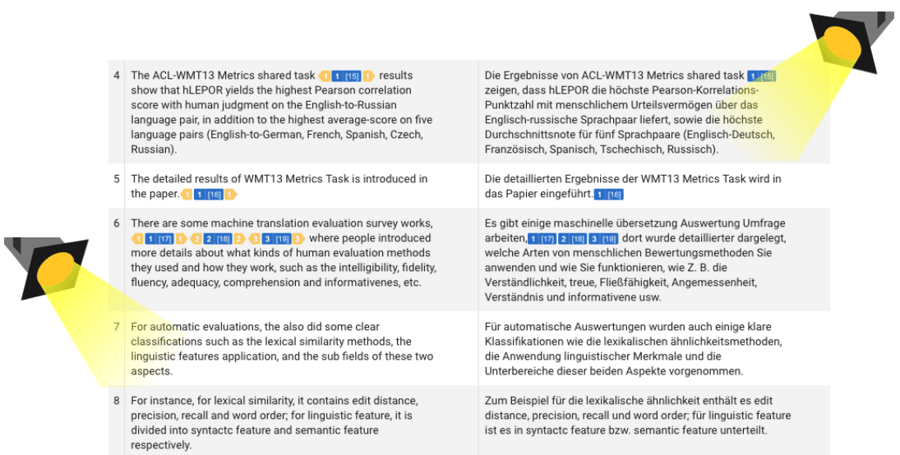

Spotlight is a tool that allows you to evaluate the results of MT training wisely, without rolling the dice of random sampling.

It is for you if:

👨‍💻 You train custom MT models

🧰 You want to ensure the customization yields production-level quality

💵 You often hit time or cost limits when evaluating MT

Does this process look familiar?

🔍 You use corpus-level BLEU from the MT Training Console to see if the model improved. If it didn’t improve, you can’t tell what went wrong. If it improved, you don’t know if it could improve further. There’s no easy way to find examples of improved and degraded segments to show others and prove your point.

🎲 You use random sampling to choose segments for human review. You often observe conflicting LQA results for the same model. The LQA results don't match what you see after rolling the model out to production.

⏱️ You hire reviewers to check the test sample - the most costly and lengthy part of the process. You’d better be sure your reviewers don’t waste their time on translations that didn’t change after training.

🦕 And finally, you have your PEMT reports that estimate the ROI. However, if anything went wrong at the first step, it’s already too late because the trained model is in production, and the deadline was yesterday.
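To make the pain points above concrete, here is a minimal Python sketch of segment-level triage: it skips segments whose translation did not change after training and sorts the rest into improved and degraded. This is an illustration only, not how Spotlight works. The names `similarity` and `triage`, the `min_delta` threshold, and the sample sentences are all hypothetical, and the token-overlap score from `difflib` is a simple stand-in for a real segment-level MT metric.

```python
from difflib import SequenceMatcher


def similarity(hypothesis: str, reference: str) -> float:
    """Token-level similarity in [0, 1]; a stand-in for a real MT metric."""
    return SequenceMatcher(None, hypothesis.split(), reference.split()).ratio()


def triage(references, baseline, custom, min_delta=0.05):
    """Group segment indices by how the custom model changed each translation."""
    buckets = {"unchanged": [], "improved": [], "degraded": [], "inconclusive": []}
    for i, (ref, base, cust) in enumerate(zip(references, baseline, custom)):
        if base == cust:
            # Identical output before and after training: nothing to review.
            buckets["unchanged"].append(i)
            continue
        delta = similarity(cust, ref) - similarity(base, ref)
        if delta >= min_delta:
            buckets["improved"].append(i)
        elif delta <= -min_delta:
            buckets["degraded"].append(i)
        else:
            # Output changed, but the score moved too little to call it.
            buckets["inconclusive"].append(i)
    return buckets


# Hypothetical sample data: reference translations plus the output of the
# baseline model and the custom-trained model for the same three segments.
references = ["the cat sat on the mat", "press the red button", "save your changes"]
baseline = ["the cat sat on the mat", "push red button", "save your changes"]
custom = ["the cat sat on the mat", "press the red button", "store your modifications"]

buckets = triage(references, baseline, custom)
for label, indices in buckets.items():
    print(label, indices)
```

With a split like this, reviewers would only look at segments that actually changed, and the improved and degraded buckets give ready examples to show others.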

There's a better way - SPOTLIGHT

- Mistakes become costlier the later they are discovered. Finding them early saves you from restarting MT training to make corrections when the custom model is already in production.

- We take the best practices from agile software development and apply them to MT evaluation, redesigning this process and bringing true MT curation to life.

- Spotlight is the first of Intento's tools to support agile MT curation, enabling quick analysis of the MT training results.

Watch a SPOTLIGHT presentation

SPOTLIGHT IS NOW AVAILABLE

REQUEST A DEMO